Image Extractor is a useful online service to download one, multiple or all images from many sites on the Internet. The main downside of the service is that it does not work on all sites, and that it works only for as long as the developer keeps the service operational. A quick test revealed that it works fine on many sites, but not on sites that display warnings or prompts, e.g. when you need to verify that you are 18 years of age or older to view the content. A browser extension or a desktop program such as Bulk Image Downloader works better in those cases, as these don't have the restriction.

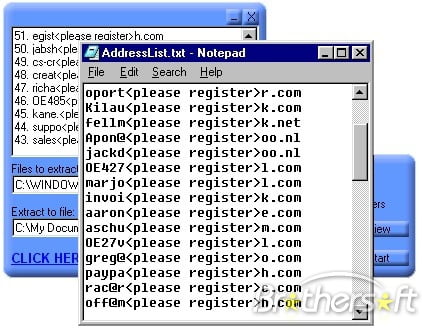

A URL is also known as a Uniform Resource Locator. This tool will extract all the meta tags of the webpage and list them with their information.

Website Downloader, Website Copier or Website Ripper allows you to download websites from the Internet to a local hard drive on your own computer. Website Downloader arranges the downloaded site by the original website's relative link structure. The downloaded website can be browsed by opening one of the HTML pages.

Image Extractor is an online service, which means that you don't need to download a program or extension to use its functionality. Just visit the main website to get started. Paste or type a URL into the form field on the site and hit the extract button to start the process. Image Extractor parses the specified address and displays the list of images that it found on the results page.

Sorting and filtering options are provided at the top; these are useful if lots of images have been returned. Images can be selected individually or in bulk by activating the "select all" button. Once you have made the selection, hit the "download selected" button to download all selected images to the local system. Individual images are downloaded in their native format, but multiple images are downloaded as a zip archive that the site creates on the fly.

Thumbnail images, filenames, image types, and the resolution of each image are displayed when you scroll the available images. You find several options beneath each image: a download button, a zoom icon, an option to copy the URL, and an option to invert the background.

The developer describes how the service works in the following paragraph:

Every time you start the extraction process the server spins up a new instance of the Google Chrome browser. This browser then navigates to the website you entered and detects all the images (visible and invisible).

According to the information on the site, the service works with dynamic websites and single page applications.
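To make the image-listing step more concrete, here is a minimal Python sketch of collecting image addresses from a page. It is not how Image Extractor works internally: the service is described as driving a real Chrome instance, whereas this sketch only reads `<img>` tags from static HTML with the standard library, so images added by scripts would be missed. The names `ImageCollector` and `extract_image_urls` are made up for illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class ImageCollector(HTMLParser):
    """Collect the absolute URL of every <img> tag that has a src attribute."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.images = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        src = dict(attrs).get("src")
        if not src:
            return  # <img> without a usable src, nothing to list
        absolute = urljoin(self.base_url, src)  # resolve relative paths
        if absolute not in self.images:  # keep each image only once
            self.images.append(absolute)


def extract_image_urls(html, base_url):
    """Return the image URLs found in html, in document order."""
    collector = ImageCollector(base_url)
    collector.feed(html)
    return collector.images
```

Given the HTML text of a page and the address it was loaded from, `extract_image_urls(html, base_url)` returns a list that could then be sorted, filtered or downloaded one by one.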
What is URL Extractor? This tool supports extraction of URLs, meta tags and images from a web page. The extracted web addresses are displayed in a simple list. Browser extensions, like the popular DownThemAll extension, and programs, like Bulk Image Downloader, support downloading any number of images from websites.

I'm trying to write a script that iterates through a list of web pages, extracts the links from each page and checks each link to see if they are in a given set of domains. I have the script set up to write two files: pages with links in the given domains are written to one file while the rest are written to the other. I'm essentially trying to sort the pages based on the links in the pages. Below is my script, but it doesn't look right. I'd appreciate any pointers to achieve this (I'm new at this, can you tell).

The script imports `requests`, parses each page with `soup = BeautifulSoup(grab.text, 'html.parser')`, builds a pattern with `check_url = re.compile(...)` and tests each link with `invalid = check_url.search(data)`.

There are some very basic problems with your code:

- `if invalid = None` is missing a `:` at the end, but should also be `if invalid is None:`.
- Not all `<a>` elements will have an `href`, so you need to deal with those, or your script will fail.
- The regex has some issues (you probably don't want to repeat that first URL, and the parentheses are pointless).
- You write the URL to the file every time you find a problem, but you only need to write it to the file if it has a problem at all. Or perhaps you wanted a full list of all the problematic links?
- You rewrite the files on every iteration of your `for` loop, so you only get the final result.
- You open file handles, but never close them; use `with` instead.
- You loop over a list using an index. That's not needed; loop over `urls` directly.
- You compile a regex for efficiency, but do so on every iteration, countering the effect.

Fixing all that (and using a few arbitrary URLs that work), the script opens both output files once with `with open('links_good.txt', 'w') as f, open('links_need_update.txt', 'w') as g:` and applies one rule to each link: if there's no result, the link doesn't match what we need, so write it and stop searching. Or, if you want to list all the problematic URLs on the sites, write every link that fails the check instead of stopping at the first one.
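The fixes discussed above can be sketched roughly as follows. This is a hedged reconstruction, not the answer's original code: the domains in `CHECK_URL` are placeholders, the function names are hypothetical, and the standard library's `html.parser` stands in for BeautifulSoup so the sketch runs without third-party packages. It also assumes that a page counts as "good" only if every link on it matches the pattern, and it takes already-fetched HTML so it can be tried offline.

```python
import re
from html.parser import HTMLParser

# Compiled once, outside any loop. The domains are placeholders.
CHECK_URL = re.compile(r"https?://(?:www\.)?(?:example\.com|example\.org)(?:/|$)")


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag that actually has one."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:  # anchors without an href are skipped, not fatal
                self.hrefs.append(href)


def page_links_ok(html):
    """Return True if every link on the page matches CHECK_URL.

    all() stops at the first link that fails, which mirrors
    "write it and stop searching". A page without links counts as good.
    Relative links do not match the pattern and count as failures.
    """
    collector = LinkCollector()
    collector.feed(html)
    return all(CHECK_URL.match(href) for href in collector.hrefs)


def sort_pages(pages, good_path="links_good.txt", bad_path="links_need_update.txt"):
    """Write each page URL to one of two files; pages maps URL -> HTML text."""
    # Both files are opened once, before the loop, and closed by "with".
    with open(good_path, "w") as good, open(bad_path, "w") as bad:
        for url, html in pages.items():  # iterate directly, no index
            target = good if page_links_ok(html) else bad
            target.write(url + "\n")
```

To run it against live pages, the `pages` mapping could be built with something like `{url: requests.get(url).text for url in urls}`, which keeps the network access separate from the sorting logic.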
